Logging with Loki and Grafana in Kubernetes

You already know the most important building blocks for starting your application from our tutorial series. Are you still missing metrics and logs for your applications? After this blog post, you can tick off the latter.

Logging with Loki and Grafana in Kubernetes – an Overview

One of the best-known, heavyweight solutions for collecting and managing your logs is also available for Kubernetes. It usually consists of Logstash or Fluentd for collecting, paired with Elasticsearch for storing and Kibana or Graylog for visualising your logs.

In addition to this classic combination, a new, more lightweight stack has been available for a few years now with Loki and Grafana! The basic architecture hardly differs from the familiar setups.

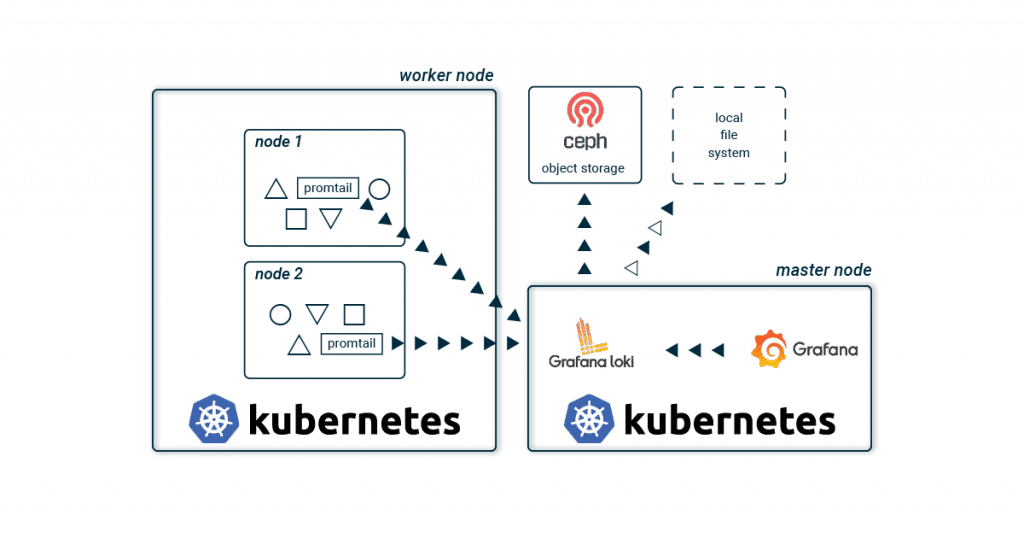

Promtail collects the logs of all containers on each Kubernetes node and sends them to a central Loki instance. Loki aggregates all logs and writes them to a storage back-end. Grafana, which fetches the logs directly from the Loki instance, is used for visualisation.

The biggest difference from the known stacks is probably the lack of Elasticsearch. This saves resources and effort, because no triple-replicated full-text index has to be stored and administered. Especially when you are just starting to build up your application, a lean and simple stack sounds appealing. As the application landscape grows, individual Loki components can be scaled out to spread the load across multiple servers.

No full text index? How does it work?

Of course, Loki does not do without an index for quick searches, but it indexes only metadata (similar to Prometheus). This greatly reduces the effort required to run the index. For your Kubernetes cluster, it is mainly labels that end up in the index, so your logs are automatically organised using the same metadata as your applications. Using a time window and the labels, Loki quickly and easily finds the logs you are looking for.

To store the index, you can choose from various databases. Besides the two cloud databases BigTable and DynamoDB, Loki can also store its index locally in Cassandra or BoltDB. The latter does not support replication and is mainly suitable for development environments. Loki offers another database, boltdb-shipper, which is currently still under development. This is primarily intended to remove dependencies on a replicated database and regularly store snapshots of the index in chunk storage (see below).

A quick example

A chunk contains the compressed logs of exactly one stream and is limited to a maximum size and a maximum time span. These compressed data records are then stored in the chunk storage.
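To make the idea a little more tangible, here is a minimal Python sketch of how the lines of one stream could be cut into compressed chunks. The names `Chunk` and `ChunkBuilder`, the limits and the use of gzip are assumptions for illustration only; Loki's real chunk format and defaults look different.

```python
import gzip
from dataclasses import dataclass, field


@dataclass
class Chunk:
    start: float  # timestamp of the first line in the chunk
    end: float    # timestamp of the last line in the chunk
    data: bytes   # gzip-compressed log lines


@dataclass
class ChunkBuilder:
    max_bytes: int = 1024   # hypothetical size limit (uncompressed)
    max_age: float = 60.0   # hypothetical time limit in seconds
    _lines: list = field(default_factory=list)
    _size: int = 0

    def append(self, ts: float, line: str):
        """Buffer a line; return a finished Chunk if a limit was hit, else None."""
        done = None
        too_big = self._size + len(line.encode()) > self.max_bytes
        too_old = bool(self._lines) and ts - self._lines[0][0] > self.max_age
        if self._lines and (too_big or too_old):
            done = self.flush()
        self._lines.append((ts, line))
        self._size += len(line.encode())
        return done

    def flush(self):
        """Compress the buffered lines into a Chunk and reset the buffer."""
        if not self._lines:
            return None
        payload = "\n".join(line for _, line in self._lines).encode()
        chunk = Chunk(self._lines[0][0], self._lines[-1][0], gzip.compress(payload))
        self._lines, self._size = [], 0
        return chunk
```

Each call to `append` either just buffers the line or hands back a finished chunk that is ready to be written to the chunk storage.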

Label vs. Stream

A combination of exactly the same labels (including their values) defines a stream. If you change a label or its value, a new stream is created. For example, the logs from stdout of an nginx pod are in a stream with the labels: pod-template-hash=bcf574bc8, app=nginx and stream=stdout.
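As a rough illustration of this rule, the following Python sketch derives a stream identity from a set of labels. The helper `stream_key` is hypothetical and not part of Loki; it only shows that the order of the labels does not matter, while any changed value results in a different stream.

```python
def stream_key(labels: dict) -> tuple:
    """Canonical identity of a stream: all label names and values, order-independent."""
    return tuple(sorted(labels.items()))


nginx_stdout = {"app": "nginx", "pod-template-hash": "bcf574bc8", "stream": "stdout"}
same_labels = {"stream": "stdout", "app": "nginx", "pod-template-hash": "bcf574bc8"}
nginx_stderr = {**nginx_stdout, "stream": "stderr"}  # one changed value

streams = {stream_key(labels) for labels in (nginx_stdout, same_labels, nginx_stderr)}
print(len(streams))  # prints 2: stdout and stderr are separate streams
```

This is also why labels with many distinct values quickly lead to a large number of streams.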

In Loki’s index, these chunks are linked with the stream’s labels and a time window. A search in the index must therefore only be filtered by labels and time windows. If one of these links matches the search criteria, the chunk is loaded from the storage and the logs it contains are filtered according to the search query.
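The two-step lookup described here can be sketched in a few lines of Python. Everything in this example (`IndexEntry`, `find_chunks`, `search`, the in-memory `storage` dictionary with uncompressed lines) is a simplified assumption and not Loki's actual data model; it is only meant to show that the index is consulted with labels and a time window, and that the log lines themselves are only touched afterwards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexEntry:
    labels: frozenset  # label pairs of the stream, e.g. {("app", "nginx")}
    start: int         # first timestamp covered by the chunk
    end: int           # last timestamp covered by the chunk
    chunk_id: str      # key of the chunk in the chunk storage


def find_chunks(index, wanted: dict, start: int, end: int):
    """Step 1: use only the index, matching labels and time window."""
    want = frozenset(wanted.items())
    return [
        entry.chunk_id
        for entry in index
        if want <= entry.labels and entry.start <= end and entry.end >= start
    ]


def search(index, storage, wanted: dict, start: int, end: int, needle: str):
    """Step 2: load only the matching chunks and filter their lines."""
    hits = []
    for chunk_id in find_chunks(index, wanted, start, end):
        hits += [line for line in storage[chunk_id] if needle in line]
    return hits


nginx = frozenset({("app", "nginx"), ("stream", "stdout")})
redis = frozenset({("app", "redis"), ("stream", "stdout")})
index = [
    IndexEntry(nginx, 0, 99, "c1"),
    IndexEntry(nginx, 100, 199, "c2"),
    IndexEntry(redis, 0, 199, "c3"),
]
storage = {
    "c1": ["GET / from 192.168.0.1", "GET /health from 10.0.0.7"],
    "c2": ["GET /a from 192.168.0.1"],
    "c3": ["PING from 192.168.0.1"],
}
print(search(index, storage, {"app": "nginx"}, 0, 99, "192.168.0.1"))
```

In this toy data set, only chunk `c1` is loaded for the query, although the search string also occurs in the other two chunks.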

Chunk Storage

The compressed and fragmented log streams are stored in the chunk storage. As with the index, you can also choose between different storage back-ends. Due to the size of the chunks, an object store such as GCS, S3, Swift or our Ceph object store is recommended. Replication is automatically included and the chunks are automatically removed from the storage based on an expiry date. In smaller projects or development environments, you can of course also start with a local file system.

Visualisation with Grafana

Grafana is used for visualisation. Preconfigured dashboards can be easily imported. LogQL is used as the query language. This in-house creation of Grafana Labs leans heavily on PromQL from Prometheus and is just as quick to learn. A query consists of two parts:

First, you filter for the corresponding chunks using labels and the Log Stream Selector. With = you always make an exact comparison, and =~ allows the use of regex. As usual, both are negated with !, which gives you != and !~.

After you have limited your search to certain chunks, you can narrow it down further with a search expression. Here, too, you can use various operators such as |= and |~, as well as their negations != and !~, to further restrict the result. A few examples are probably the quickest way to show the possibilities:

Log Stream Selector:

{app = "nginx"}

{app != "nginx"}

{app =~ "ngin.*"}

{app !~ "nginx$"}

{app = "nginx", stream != "stdout"}

Search Expression:

{app = "nginx"} |= "192.168.0.1"

{app = "nginx"} != "192.168.0.1"

{app = "nginx"} |~ "192.*"

{app = "nginx"} !~ "192$"
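If you want to get a feeling for these operators without a running Loki, the following Python sketch mimics their semantics. It rests on two assumptions that you should keep in mind: Python's `re` module stands in for the Go regex syntax Loki uses, and, to my understanding of the LogQL documentation, regex matchers on labels are fully anchored while regex filters on log lines are not. The function names `label_matches` and `line_matches` are made up for this example.

```python
import re


def label_matches(value: str, op: str, pattern: str) -> bool:
    """Evaluate one label matcher of a log stream selector."""
    if op == "=":
        return value == pattern
    if op == "!=":
        return value != pattern
    if op == "=~":  # assumed to be fully anchored, as in Prometheus
        return re.fullmatch(pattern, value) is not None
    if op == "!~":
        return re.fullmatch(pattern, value) is None
    raise ValueError(f"unknown label operator: {op}")


def line_matches(line: str, op: str, pattern: str) -> bool:
    """Evaluate one search expression against a single log line."""
    if op == "|=":  # line contains the string
        return pattern in line
    if op == "!=":  # line does not contain the string
        return pattern not in line
    if op == "|~":  # regex may match anywhere in the line
        return re.search(pattern, line) is not None
    if op == "!~":
        return re.search(pattern, line) is None
    raise ValueError(f"unknown line operator: {op}")


line = "192.168.0.1 - GET /index.html"
print(label_matches("nginx", "=~", "ngin.*"))    # True
print(line_matches(line, "|=", "192.168.0.1"))   # True
print(line_matches(line, "!~", "192$"))          # True, the line does not end in 192
```

Note that != appears in both functions: inside the curly braces it compares a label value, after them it filters log lines.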

Further possibilities such as aggregations are explained in detail in the official documentation of LogQL.

After this short introduction to the architecture and functionality of Grafana Loki, we will start right away with the installation. A lot more information and further possibilities for Grafana Loki are available in the official documentation.

Get it running!

You would like to just try out Loki?

With the NWS Managed Kubernetes Cluster you can do without the details! With just one click you can start your Loki Stack and always have your Kubernetes Cluster in full view!

As usual with Kubernetes, a running example is deployed faster than reading the explanation. Using Helm and a few variables, your lightweight logging stack is quickly installed. First, we initialise two Helm repositories. Besides Grafana, we also add the official Helm stable charts repository. After two short helm repo add commands we have access to the required Loki and Grafana charts.

Install Helm

brew install helm

apt install helm

choco install kubernetes-helm

You don’t have the right sources? On helm.sh you will find a brief guide for your operating system.

helm repo add loki https://grafana.github.io/loki/charts

helm repo add stable https://kubernetes-charts.storage.googleapis.com/

Install Loki and Grafana

For your first Loki stack you do not need any further configuration. The default values fit very well and helm install does the rest. Before installing Grafana, we first set its configuration using the well-known Helm values file. Save it under the name grafana.values.

In addition to the password for the administrator, the Loki instance that has just been installed is also set as the data source. For visualisation, we import a dashboard and the required plugins. This way, you install a Grafana that is already configured for Loki and can get started directly after the deployment.

grafana.values:

---
adminPassword: supersecret

datasources:
  datasources.yaml:
    apiVersion: 1
    datasources:
      - name: Loki
        type: loki
        url: http://loki-headless:3100
        jsonData:
          maxLines: 1000

plugins:
  - grafana-piechart-panel

dashboardProviders:
  dashboardproviders.yaml:
    apiVersion: 1
    providers:
      - name: default
        orgId: 1
        folder:
        type: file
        disableDeletion: true
        editable: false
        options:
          path: /var/lib/grafana/dashboards/default

dashboards:
  default:
    Logging:
      gnetId: 12611
      revision: 1
      datasource: Loki

The actual installation is done with the help of helm install. The first parameter is a freely selectable release name. Using this name as a label selector, you can also quickly get an overview of what has been deployed:

helm install loki loki/loki-stack --namespace kube-system

helm install loki-grafana stable/grafana -f grafana.values --namespace kube-system

kubectl get all -n kube-system -l release=loki

After deployment, you can log in as admin with the password supersecret. To be able to access the Grafana web interface directly at http://localhost:3001, you still need a port-forward:

kubectl --namespace kube-system port-forward service/loki-grafana 3001:80

The logs of your running pods should be immediately visible in Grafana. Try the queries under Explore and discover the dashboard!
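If you would rather run a query from a script than from Explore, Loki also offers an HTTP API. The following Python sketch builds a request for the `/loki/api/v1/query_range` endpoint. It assumes that the Loki service itself has been made reachable on `http://localhost:3100`, for example with an additional port-forward that is not part of the steps above. The helper names `build_query_url` and `fetch_lines` are made up for this example.

```python
import json
import time
import urllib.parse
import urllib.request


def build_query_url(base_url, logql, minutes=60, limit=100, now_ns=None):
    """Build a query_range URL covering the last `minutes` minutes."""
    now_ns = time.time_ns() if now_ns is None else now_ns
    params = {
        "query": logql,
        "start": now_ns - minutes * 60 * 10**9,  # nanosecond Unix timestamps
        "end": now_ns,
        "limit": limit,
    }
    return f"{base_url}/loki/api/v1/query_range?{urllib.parse.urlencode(params)}"


def fetch_lines(url):
    """Run the query and return the plain log lines of all matching streams."""
    with urllib.request.urlopen(url) as response:
        body = json.load(response)
    return [
        line
        for stream in body["data"]["result"]
        for _timestamp, line in stream["values"]
    ]
```

With the assumed port-forward in place, `fetch_lines(build_query_url("http://localhost:3100", '{app="nginx"} |= "192.168.0.1"'))` should return the same lines you see in Explore for that query.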

Logging with Loki and Grafana in Kubernetes – the Conclusion

With Loki, Grafana Labs offers a new approach to central log management. The use of low-cost and easily available object stores makes the time-consuming administration of an Elasticsearch cluster superfluous. The simple and fast deployment is also ideal for development environments. While the two alternatives Kibana and Graylog offer a powerful feature set, for some administrators Loki with its streamlined and simple stack may be more enticing.
